The mass digitization of everyday life has turned personal data into the raw material of a global economy worth trillions of euros, while the right to privacy is increasingly eroded by both the interests of tech corporations and state surveillance tools. The classical concept of privacy, defined in 1890 as “the right to be let alone,” has undergone a radical transformation in recent decades. Today, data is a constantly contested economic asset, and privacy has shifted from a right to a negotiable commodity. This change stems from the consolidation of a business model based on the systematic extraction of personal information, in which citizens, often unknowingly, trade their data for digital services without understanding the true scale of that exchange.

Privacy is a fundamental pillar of democratic societies, as it guarantees individual autonomy, freedom of thought, and the ability to develop a personal identity without constant surveillance. Without privacy, individuals tend to adapt their behavior to what they perceive as socially acceptable out of fear of repercussions or classification, a phenomenon known as the “chilling effect” on civil liberties. This right does not protect only those with something to hide; it ensures that everyone can decide what information is shared, with whom, and for what purpose.

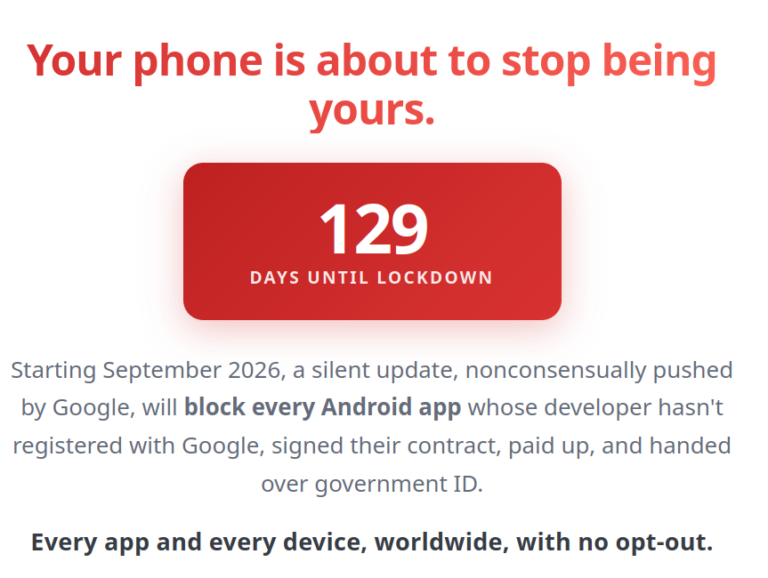

However, technological expansion has profoundly transformed this landscape. Corporations such as Google and Meta have built economic empires on the exploitation of personal data, which fuels their targeted advertising systems. Every internet search, location record, and social media interaction generates a digital trace that, when processed through advanced algorithms and artificial intelligence, enables highly precise population segmentation and the prediction of behaviors, consumer preferences, and even political tendencies. A 2025 study involving nearly 25,000 participants in China also reveals a privacy gap between social classes: lower-income individuals have fewer protection tools and are more vulnerable to data exploitation, while higher education levels correlate with greater awareness of risks.

The case of Palantir Technologies illustrates the dangers of mass data access without democratic oversight. Co-founded by Peter Thiel, the company developed the Gotham platform, which can build detailed individual profiles, map social networks, track movements, and identify personal or administrative characteristics. The system uses artificial intelligence to combine police data with information from mobile devices and social media. The German civil rights organization Gesellschaft für Freiheitsrechte has warned that such large-scale assessments may violate the fundamental right to informational self-determination. The main concern is opacity: because the system is proprietary, neither citizens nor public officials can audit the algorithms that determine who is flagged as suspicious, which undermines democratic oversight. Over 264,000 people in Germany signed a petition within a week to stop its use, while the Constitutional Court reviews its legality.

State dependence on these private tools creates a scenario where economic logic can override fundamental rights. Governments may prioritize efficiency and access to innovation, or accept conditions that place corporate interests above citizen protection. For example, they may continue using opaque or intrusive systems because of their effectiveness or profitability, even when those systems pose risks to rights such as privacy, equality, or the presumption of innocence. Public-private collaboration without sufficient oversight can weaken democratic safeguards.

U.S. Immigration and Customs Enforcement has invested over $200 million in contracts with Palantir, illustrating how a corporation can become central to managing sensitive data. Meanwhile, the Chaos Computer Club has denounced the software as “intentionally opaque” and warned that once such companies are integrated into state systems, they are difficult to remove.

In the European context, the data protection legal framework, including the General Data Protection Regulation, the Law Enforcement Directive, and the case law of the Court of Justice of the European Union, provides protection against predictive surveillance technologies that may be more apparent than real. Its application is not always clear and often requires context-specific assessments that individuals are ill-equipped to perform. Even when the framework applies, exceptions and loopholes allow circumvention of safeguards such as the prohibition of automated decision-making. This raises a fundamental question about whether such technologies should be developed at all. Technological advancement outpaces legislative capacity, while low public awareness limits civic mobilization.

Defending privacy is one of the key democratic challenges of the 21st century. It is not about having something to hide but about maintaining control over personal information. What is at stake is not only individual privacy but the very possibility of living without constant surveillance and classification by opaque systems beyond public accountability.

References

- Warren and Brandeis (1890). The Right to Privacy. Harvard Law Review. https://www.cs.cornell.edu/~shmat/courses/cs5436/warren-brandeis.pdf
- Electronic Frontier Foundation (EFF) (2023). Corporate Spy Tech and Inequality: 2023 Year in Review. https://www.eff.org/deeplinks/2023/12/corporate-spy-tech-and-inequality-2023-year-review
- Gesellschaft für Freiheitsrechte (GFF) (2024). Black box Palantir: To Karlsruhe against mass data mining. https://freiheitsrechte.org/en/themen/freiheit-im-digitalen-zeitalter/palantir-bayern
- DW (2025). German police expands use of Palantir surveillance software. https://www.dw.com/en/german-police-expands-use-of-palantir-surveillance-software/a-73497117
- Legal Data (2025). Spy in the police station? Why the Chaos Computer Club warns against Palantir. https://legaldata.law/en/spy-in-the-police-station-why-the-chaos-computer-club-warns-against-palantir/
- State of Surveillance (2025). Palantir’s $287 Million Immigration Machine: How ICE Tracks Everyone. https://stateofsurveillance.org/articles/surveillance/palantir-immigration-machine-287-million/
- Lynskey, Orla (2019). Criminal justice profiling and EU data protection law: precarious protection from predictive policing. International Journal of Law in Context. https://www.cambridge.org/core/journals/international-journal-of-law-in-context/article/criminal-justice-profiling-and-eu-dataprotection-law-precarious-protection-frompredictive-policing/10FD4B64364191B619FBCB864CD40A7F